How to Build a Proposal Library That Makes Every New Proposal Faster

A consulting proposal library is the highest-leverage asset a solo consultant can build from work already completed.

KEY TAKEAWAYS

- Every proposal a solo consultant has sent is a reusable data asset — most are buried in sent-mail, generating zero return.

- A proposal library converts past wins into future speed; a solo consultant can build the structure in under one hour.

- Tag proposals by industry, problem type, engagement size, and outcome — four fields unlock the compound value of the archive.

- Win/loss patterns visible in a library of ten proposals are enough to rewrite scope framing and close more work.

What is a consulting proposal library and why does it matter?

A consulting proposal library is a structured archive of every proposal a solo consultant has sent — tagged, searchable, and organized so that each new pitch draws on proven language, winning proof blocks, and tested scope framing rather than blank-page writing.

It converts the institutional knowledge trapped in sent-mail folders into a compounding competitive advantage. A library of ten proposals, properly tagged, gives a solo consultant faster drafting, better pricing data, and a live record of what actually closes work.

CORE COMPONENTS:

- Proposal archive — every sent proposal stored in one searchable system, not scattered across email and desktop folders

- Tagging taxonomy — industry, problem type, engagement size, and outcome fields that make retrieval instant

- Proof block vault — pre-written case summaries organized by relevance, pulled directly from won proposals

- Scope and pricing log — a record of what tiers were proposed, at what price points, and what happened

- Win/loss signal layer — outcome data that surfaces which proposals won and why, turning the archive into a learning system

Every proposal a solo consultant has ever sent is a data asset — and most of them are buried in sent-mail folders, generating zero return. The language that framed a problem clearly, the scope that a client signed without negotiation, the proof case that transferred confidence — all of it exists, already written. What is missing is the system that makes it retrievable and reusable.

Sana, an independent strategy consultant billing $200 per hour, has sent more than forty proposals over three years. Her best proposals (the ones that closed without friction, where the scope fit perfectly, where the client said "this is exactly what we need") are buried in closed email threads and old PDFs. She cannot find them when she needs them. She cannot identify which proof cases win in which industries. She has no record of which price points held and which stalled. Each new proposal starts from zero because the archive does not function as an asset.

A proposal library solves this problem directly. It takes the archive that already exists and turns it into infrastructure — a retrieval system, a pricing database, a proof vault, and a learning mechanism in one structured tool. The investment is under one hour to build the initial structure. The return compounds with every proposal added.

What is a consulting proposal library and why does it compound in value?

A consulting proposal library is a structured database of every proposal a solo consultant has sent, organized so that each new proposal draws on the full archive rather than starting from scratch. It is not a folder of PDFs — it is a tagged, searchable system that surfaces the right reference material at the moment of need.

The compounding mechanism is specific. Each proposal added to the library increases the value of every future retrieval. The fifth proposal in the archive is marginally useful; the twentieth is significantly more useful; by the fiftieth, drafting for any familiar problem type can be near-instant. This is the same compounding logic that makes knowledge management for independent consultants a high-leverage investment: assets built once deliver returns repeatedly.

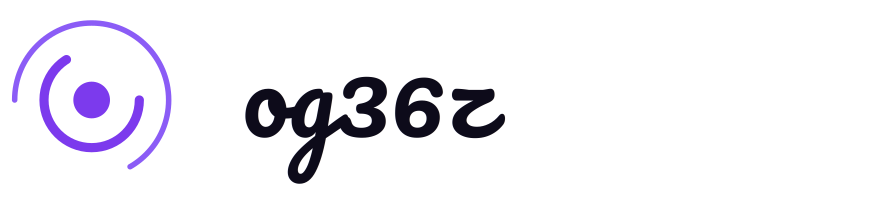

The value compounds across four distinct dimensions:

Speed. A consultant with a tagged proposal library does not write proof blocks from scratch. She retrieves the block most relevant to the current buyer, adapts the client-specific details, and drops it in. A task that takes forty minutes without a library takes eight minutes with one. Across ten proposals per year, this recovers more than five billable hours annually.

Scope quality. A proposal library captures which scope configurations were accepted without negotiation, which required modification, and which were rejected. After ten proposals, scope design is no longer guesswork. The consultant knows which tier lands with mid-size manufacturing clients and which stalls with distributed-decision technology companies.

Pricing confidence. Pricing is one of the highest-anxiety decisions in a solo practice. A proposal library that records what price points held across different engagement types converts anxiety into evidence. The consultant does not have to estimate — she has data on what the market has accepted.

Win/loss intelligence. Outcome data in a proposal library surfaces patterns that would otherwise be invisible. If three of the last four losses happened on proposals where scope was presented before proof of capability, the structure is wrong — not the price. Only a library that records both content and outcome can surface this signal.

The library does not require a large archive to deliver value. Ten proposals with complete tagging reveal more actionable patterns than forty proposals in an unstructured folder.
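The time-saving figure in the speed paragraph above can be checked with a few lines of arithmetic. This sketch uses only numbers already stated in the article (forty minutes, eight minutes, ten proposals per year, and Sana's $200 hourly rate); the variable names are illustrative.

```python
# Figures quoted in the article; variable names are illustrative.
minutes_without_library = 40
minutes_with_library = 8
proposals_per_year = 10
hourly_rate = 200  # Sana's stated billing rate in dollars

minutes_saved_per_proposal = minutes_without_library - minutes_with_library
hours_saved_per_year = minutes_saved_per_proposal * proposals_per_year / 60
value_recovered = hours_saved_per_year * hourly_rate

print(f"Saved per proposal: {minutes_saved_per_proposal} minutes")
print(f"Saved per year: {hours_saved_per_year:.1f} hours")
print(f"Value at the stated rate: ${value_recovered:.0f}")
```

Thirty-two minutes saved on each of ten proposals comes to about 5.3 hours, which matches the "more than five billable hours" claim.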

How do you structure a proposal library in Notion or Airtable?

A proposal library in Notion or Airtable is structured as a single primary database with one record per proposal sent, plus a linked secondary database for reusable proof blocks. This two-database architecture separates the archive (full proposals with outcome data) from the vault (extractable, reusable components).

Both Notion and Airtable support this architecture. The practical difference is in retrieval: Airtable's filter and grouping system is faster for a consultant who needs to slice the archive by multiple criteria simultaneously; Notion's linked database and gallery views are more useful for building the proof block vault as a visual, browsable library. Either tool works — the structure matters more than the platform.

The primary database: the proposal archive.

Each record in the proposal archive represents one sent proposal. The core fields are:

- Proposal title (client name + engagement type)

- Date sent

- Industry

- Problem type

- Engagement size (scope tier)

- Estimated value

- Outcome (won / lost / stalled / withdrawn)

- Linked proof blocks used

- One-line notes on the result

The full proposal document is attached to the record or linked from the record. The record itself does not contain the full text — it is the index entry that makes the full text findable.

The secondary database: the proof block vault.

Each record in the proof block vault is a 100-to-150-word case summary. Fields are:

- Industry

- Problem type

- Outcome achieved

- Source proposal (linked to the archive)

- Last used date

When building a new proposal, Sana filters the vault by industry and problem type, reads three to four relevant blocks, selects the two most credible, and drops them into the proof section. No writing required. No search through email threads required.
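For readers who think in data structures, the two-database architecture can be sketched in plain Python. This is a minimal illustration, not the Notion or Airtable schema itself: the class names, the `block_id` field, the `find_blocks` helper, and all sample records are assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class ProofBlock:
    """One record in the proof block vault (a 100-to-150-word case summary)."""
    block_id: str                 # hypothetical identifier, not named in the article
    industry: str
    problem_type: str
    outcome_achieved: str
    source_proposal: str          # title of the archive record it came from
    last_used: Optional[date] = None


@dataclass
class Proposal:
    """One record in the proposal archive: an index entry, not the full text."""
    title: str                    # client name + engagement type
    date_sent: date
    industry: str
    problem_type: str
    engagement_size: str          # scope tier
    estimated_value: float
    outcome: Optional[str] = None # won / lost / stalled / withdrawn; None until known
    proof_block_ids: List[str] = field(default_factory=list)
    notes: str = ""
    document_link: str = ""       # attachment or link to the full proposal


def find_blocks(vault: List[ProofBlock], industry: str, problem_type: str) -> List[ProofBlock]:
    """Return the vault records that match both retrieval tags."""
    return [b for b in vault if b.industry == industry and b.problem_type == problem_type]


# Invented sample data, for illustration only.
vault = [
    ProofBlock("pb-1", "manufacturing", "operational efficiency", "sample outcome A", "Client A ops review"),
    ProofBlock("pb-2", "healthcare", "technology implementation", "sample outcome B", "Client B rollout"),
    ProofBlock("pb-3", "manufacturing", "cost reduction", "sample outcome C", "Client C cost audit"),
]

matches = find_blocks(vault, "manufacturing", "operational efficiency")
print([b.block_id for b in matches])
```

The filter on two tags mirrors what Sana does in the vault view: narrow by industry and problem type first, then read only the handful of blocks that remain.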

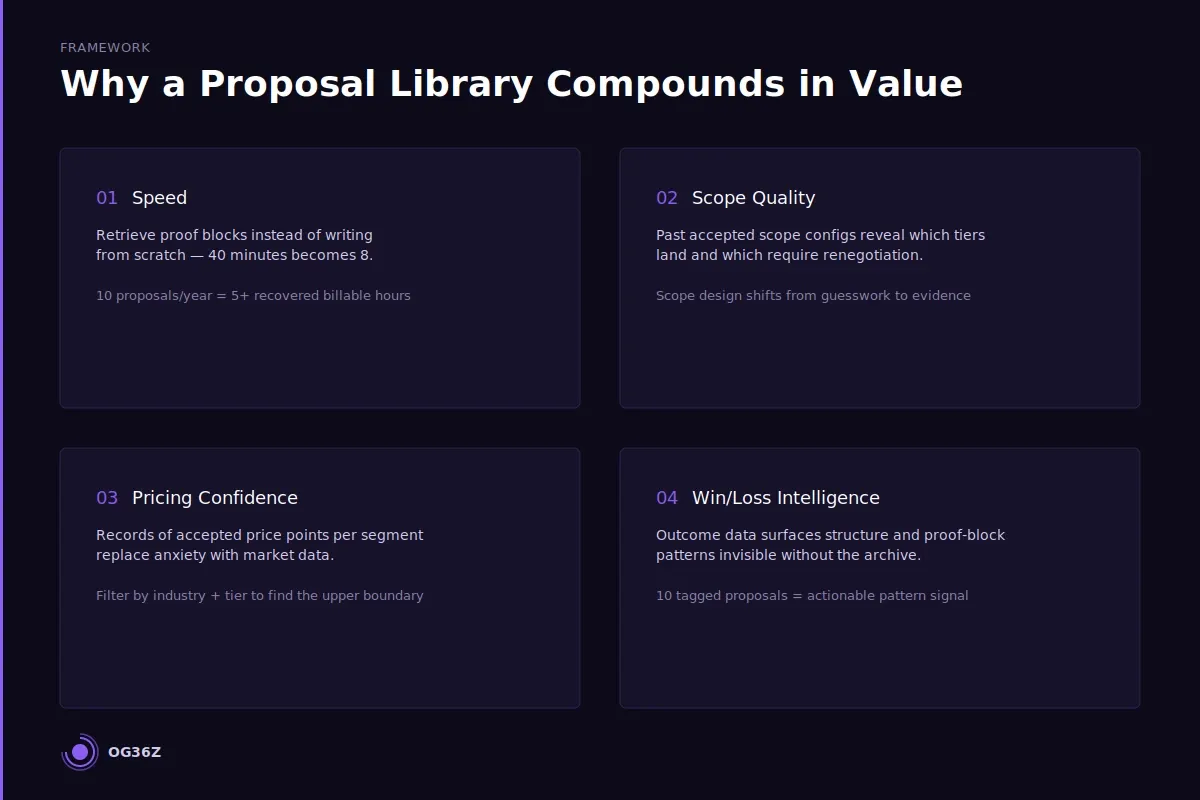

Building the structure in under one hour.

The setup sequence for a new library is: create the two databases, define the field taxonomy (see next section), import the ten most recent proposals as records, extract one proof block from each won proposal, and link the blocks to the archive records. This populates the library with enough signal to be immediately useful. See "How to Build a Proposal System That Closes More Work as a Solo Consultant" for the five-component system this library sits inside.

What should you tag and categorize in a proposal library?

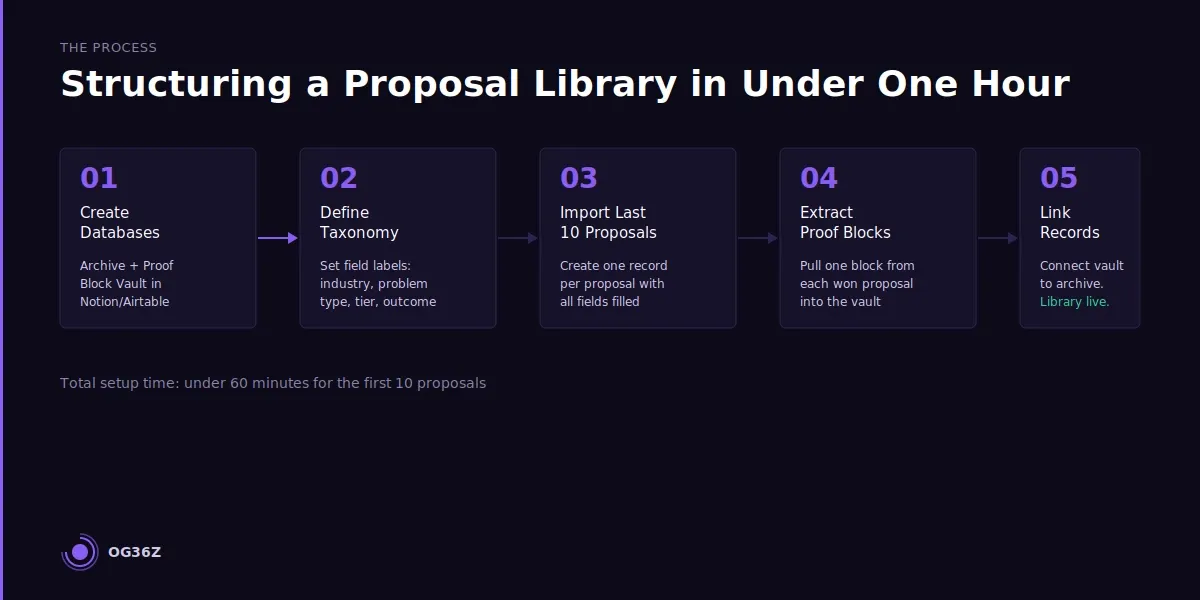

A proposal library should tag four primary fields — industry, problem type, engagement size, and outcome — plus two secondary fields: decision-maker role and proposal stage at loss. These six fields are sufficient to generate actionable patterns from a library of ten to twenty proposals.

Four primary tags:

Industry. The most important retrieval field. Proof blocks are industry-specific — a case study from manufacturing does not transfer credibly to a healthcare buyer without significant reframing. Industry tags allow instant filtering to the relevant proof cases. Use a controlled vocabulary: three to six industry labels that match the consultant's actual client base. Do not use SIC codes or overly granular sub-sectors — the library is for speed, not precision segmentation.

Problem type. The second retrieval field for proof blocks. Problem types are the recurring challenge categories that cross industries: operational efficiency, organizational change, technology implementation, revenue growth, cost reduction, strategic planning. Filtering on one industry tag plus one problem type tag can cut a vault of forty blocks down to two or three, which is precisely the retrieval speed the library is designed to produce.

Engagement size. The scope tier selected for the proposal. This field is what makes pricing patterns visible. Over time, the library reveals which tiers are most commonly accepted by which buyer profiles — and which tiers consistently stall or require renegotiation.

Outcome. Won, lost, stalled, or withdrawn. This is the signal field that turns the archive into a learning system. Without outcome tagging, the library is a reference tool. With outcome tagging, it is a diagnostic — every pattern that emerges from the archive is directly connected to a business result.

Two secondary tags:

Decision-maker role. The seniority and function of the primary decision-maker. Proposals presented to CEOs of ten-person companies close differently than proposals presented to VP-level buyers at mid-size enterprises. This tag surfaces buyer-profile-specific patterns that the primary four fields miss.

Proposal stage at loss. For lost proposals, the stage at which the engagement ended: no response, objection at scope, objection at price, timing issue, or lost to competitor. This field is the highest-leverage diagnostic in the library. See "How Independent Consultants Can Write Proposals in Half the Time" for how stage-at-loss data drives structural improvements.
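A controlled vocabulary only works if every record sticks to it. In Notion or Airtable a single-select field enforces this automatically; the sketch below shows the same idea in Python for anyone keeping the archive in a spreadsheet export. The problem types, outcomes, and loss stages are the ones listed above; the industry labels, field names, and the `validate_tags` helper are assumptions for illustration.

```python
# Vocabulary taken from the article, except INDUSTRIES, which is a placeholder.
INDUSTRIES = {"manufacturing", "healthcare", "technology"}  # replace with your own labels
PROBLEM_TYPES = {
    "operational efficiency", "organizational change", "technology implementation",
    "revenue growth", "cost reduction", "strategic planning",
}
OUTCOMES = {"won", "lost", "stalled", "withdrawn"}
STAGES_AT_LOSS = {
    "no response", "objection at scope", "objection at price",
    "timing issue", "lost to competitor",
}


def validate_tags(record: dict) -> list:
    """Return a list of problems; an empty list means every tag is in vocabulary."""
    problems = []
    if record.get("industry") not in INDUSTRIES:
        problems.append(f"unknown industry: {record.get('industry')!r}")
    if record.get("problem_type") not in PROBLEM_TYPES:
        problems.append(f"unknown problem type: {record.get('problem_type')!r}")
    outcome = record.get("outcome")  # may be None until the result is known
    if outcome is not None and outcome not in OUTCOMES:
        problems.append(f"unknown outcome: {outcome!r}")
    stage = record.get("stage_at_loss")
    if stage is not None:
        if outcome != "lost":
            problems.append("stage_at_loss is only recorded for lost proposals")
        elif stage not in STAGES_AT_LOSS:
            problems.append(f"unknown stage at loss: {stage!r}")
    return problems


# Invented example record with a mistyped industry label.
record = {"industry": "mfg", "problem_type": "cost reduction", "outcome": "lost",
          "stage_at_loss": "objection at price"}
print(validate_tags(record))
```

Catching a stray label such as "mfg" at entry time keeps the industry filter from silently missing records later.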

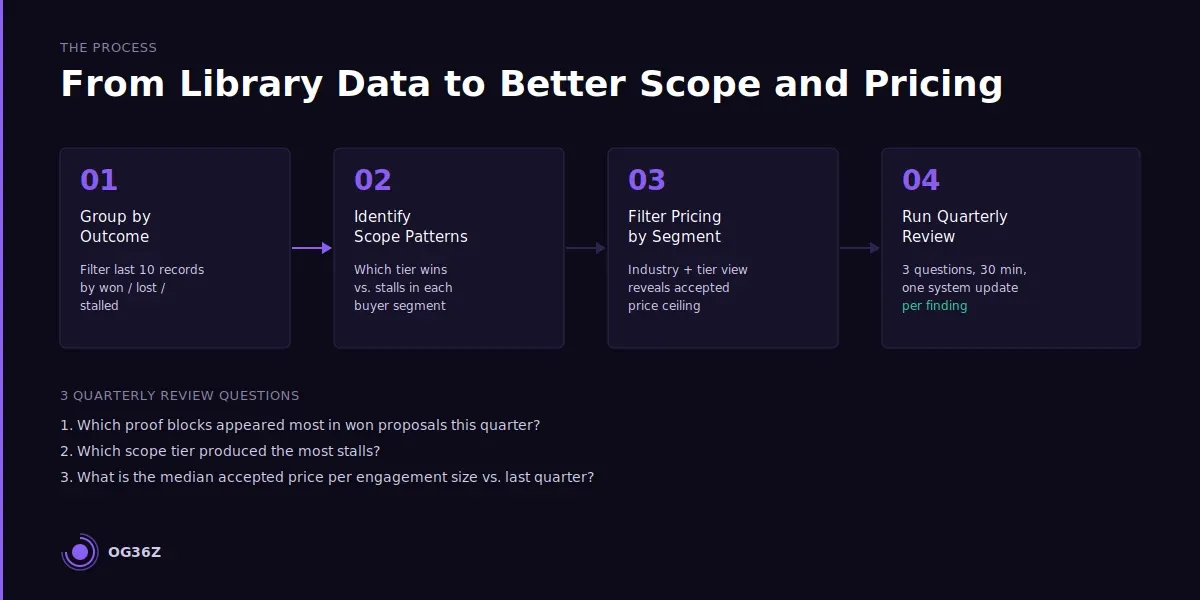

How do you use a proposal library to build better scope and pricing over time?

A proposal library builds better scope and pricing over time by making the outcomes of past decisions visible and connecting them directly to the variables that drove those outcomes. The mechanism is pattern recognition on actual data rather than intuition about what should work.

Scope improvement from library data.

After ten proposals, group the records by outcome and compare scope tier selection. The patterns that typically appear: one tier produces a disproportionate share of wins; another tier consistently generates scope renegotiation before signing; a third is proposed but rarely closed at the stated price. These patterns are not visible without a library — they exist in memory as vague impressions. In a tagged database, they are filterable facts.

The correction is direct. A tier that consistently generates scope renegotiation is probably too broad: it contains deliverables that the buyer does not value enough to pay for at the stated price. Either the scope is narrowed to remove the drag components, or the price for the full scope is reduced to reflect buyer behavior. Either correction should improve close rate on the next cycle.
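The group-by-outcome comparison described in this section amounts to a small cross-tabulation. The sketch below assumes the archive has been exported as (tier, outcome) pairs; the tier names and the ten sample records are invented, and `outcomes_by_tier` and `win_rate` are hypothetical helper names.

```python
from collections import Counter, defaultdict

# Invented sample: ten proposals as (scope tier, outcome) pairs.
records = [
    ("small", "won"), ("small", "won"), ("small", "won"), ("small", "lost"),
    ("medium", "won"), ("medium", "stalled"), ("medium", "stalled"),
    ("large", "lost"), ("large", "stalled"), ("large", "won"),
]


def outcomes_by_tier(rows):
    """Count outcomes per scope tier."""
    table = defaultdict(Counter)
    for tier, outcome in rows:
        table[tier][outcome] += 1
    return table


def win_rate(counts):
    """Share of a tier's proposals that were won (0.0 if the tier is empty)."""
    total = sum(counts.values())
    return counts["won"] / total if total else 0.0


table = outcomes_by_tier(records)
for tier, counts in table.items():
    print(f"{tier}: {dict(counts)} win rate {win_rate(counts):.0%}")
```

With only ten records the percentages are rough signals rather than statistics, but they are enough to show which tier deserves a closer look.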

Pricing improvement from library data.

A proposal library is the most specific pricing reference a solo consultant has access to. Market rate surveys and peer comparisons describe general ranges; the library describes what this consultant's specific buyer profile has actually accepted. The difference is significant.

Filter the library by industry and engagement size. Group by outcome. The price points associated with won proposals in a specific segment show what this market has actually accepted at this scope level; the highest of them is the highest price with evidence behind it. Price points associated with stalled or lost proposals indicate where friction appears. This analysis takes fifteen minutes with a filtered Notion or Airtable view, and it produces pricing decisions grounded in evidence rather than anxiety.
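The same filter-then-group analysis can be sketched outside the database tool. The example assumes each archive record is a dictionary with `industry`, `engagement_size`, `estimated_value`, and `outcome` keys; those key names, the `price_summary` helper, and every figure in the sample are invented for illustration.

```python
from statistics import median

# Invented sample records.
proposals = [
    {"industry": "manufacturing", "engagement_size": "medium", "estimated_value": 12000, "outcome": "won"},
    {"industry": "manufacturing", "engagement_size": "medium", "estimated_value": 15000, "outcome": "won"},
    {"industry": "manufacturing", "engagement_size": "medium", "estimated_value": 18000, "outcome": "won"},
    {"industry": "manufacturing", "engagement_size": "medium", "estimated_value": 22000, "outcome": "stalled"},
    {"industry": "manufacturing", "engagement_size": "medium", "estimated_value": 25000, "outcome": "lost"},
    {"industry": "healthcare", "engagement_size": "medium", "estimated_value": 30000, "outcome": "won"},
]


def price_summary(rows, industry, engagement_size):
    """Filter to one segment, then summarise price points per outcome."""
    by_outcome = {}
    for p in rows:
        if p["industry"] == industry and p["engagement_size"] == engagement_size:
            by_outcome.setdefault(p["outcome"], []).append(p["estimated_value"])
    return {
        outcome: {"count": len(v), "min": min(v), "median": median(v), "max": max(v)}
        for outcome, v in by_outcome.items()
    }


summary = price_summary(proposals, "manufacturing", "medium")
for outcome, stats in summary.items():
    print(outcome, stats)
```

In this invented sample the highest won price is 18,000 and friction first appears at 22,000, so the defensible range for the next proposal in this segment sits between the two.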

Quarterly library review.

A thirty-minute quarterly review of the library extracts the maximum available signal from each cycle. Three questions drive the review: Which proof blocks appeared in the highest proportion of won proposals? Which scope tier produced the most stalls in the last quarter? What is the median accepted price per engagement size category this quarter versus last quarter? Each answer generates one system update — a proof block promoted to default status, a scope tier revised, a pricing anchor adjusted.
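Two of the three review questions reduce to simple counts and medians (the stall-rate question is a per-tier outcome count). The sketch below is one possible way to compute them from exported records, under the assumption that each record carries `date_sent`, `engagement_size`, `estimated_value`, `outcome`, and a `proof_blocks` list; the helper names and all sample values are invented.

```python
from collections import Counter
from datetime import date
from statistics import median

# Invented sample records.
proposals = [
    {"date_sent": date(2024, 2, 10), "engagement_size": "medium", "estimated_value": 12000,
     "outcome": "won", "proof_blocks": ["pb-1", "pb-2"]},
    {"date_sent": date(2024, 3, 5), "engagement_size": "medium", "estimated_value": 14000,
     "outcome": "won", "proof_blocks": ["pb-1"]},
    {"date_sent": date(2024, 3, 20), "engagement_size": "medium", "estimated_value": 20000,
     "outcome": "stalled", "proof_blocks": ["pb-3"]},
    {"date_sent": date(2024, 5, 2), "engagement_size": "medium", "estimated_value": 16000,
     "outcome": "won", "proof_blocks": ["pb-1", "pb-3"]},
    {"date_sent": date(2024, 6, 11), "engagement_size": "medium", "estimated_value": 18000,
     "outcome": "won", "proof_blocks": ["pb-2"]},
]


def quarter_of(d):
    """Map a date to (year, quarter number)."""
    return (d.year, (d.month - 1) // 3 + 1)


def block_share_of_wins(rows):
    """For each proof block, the fraction of won proposals that included it."""
    wins = [p for p in rows if p["outcome"] == "won"]
    counts = Counter(b for p in wins for b in set(p["proof_blocks"]))
    return {b: n / len(wins) for b, n in counts.items()}


def median_won_price(rows):
    """Median accepted price keyed by (quarter, engagement size)."""
    buckets = {}
    for p in rows:
        if p["outcome"] == "won":
            key = (quarter_of(p["date_sent"]), p["engagement_size"])
            buckets.setdefault(key, []).append(p["estimated_value"])
    return {key: median(values) for key, values in buckets.items()}


shares = block_share_of_wins(proposals)
medians = median_won_price(proposals)
print(sorted(shares.items(), key=lambda item: item[1], reverse=True))
print(medians)
```

A block that shows up in most wins is a candidate for default status; a quarter-over-quarter shift in the median accepted price is the cue to adjust the pricing anchor.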

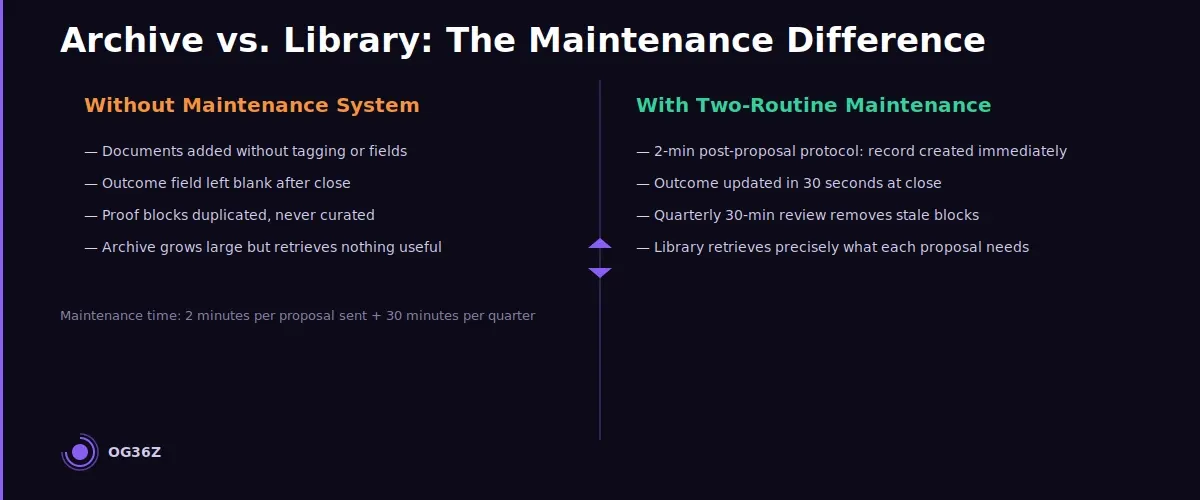

How do you maintain a proposal library without it becoming a dumping ground?

A proposal library avoids becoming a dumping ground by building maintenance into the proposal workflow rather than treating it as a separate administrative task.

The specific mechanism is a two-minute post-proposal protocol run immediately after every sent proposal and a thirty-minute quarterly review. These two routines are sufficient.

The failure mode of most consulting archives is that they become passive storage: documents added without tagging, records created without outcome updates, proof blocks duplicated without curation. The resulting archive is large but unsearchable — it contains everything and retrieves nothing useful.

The post-proposal protocol (two minutes after sending):

- Create one record in the proposal archive with the required fields filled: client name, date, industry, problem type, tier, estimated value. Leave outcome blank.

- Attach or link the sent proposal document.

- If a new proof block was written for this proposal, extract it and add it to the proof block vault with industry and problem type tags.

This two-minute step is the entire maintenance burden for each proposal. It requires no review, no curation, no synthesis. It simply ensures the record exists when the outcome is known.

The outcome update (thirty seconds after close):

When a proposal is won, lost, stalled, or withdrawn, update the outcome field. For losses, add one sentence in the notes field capturing the reason stated or inferred. This single data point is what makes the quarterly review valuable.
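The two per-proposal steps can be expressed as a pair of small functions, which makes the rule "outcome stays blank until known, losses need a reason" explicit. This is a sketch under assumptions: the record is a plain dictionary, and `new_record`, `record_outcome`, and the sample values are hypothetical.

```python
from datetime import date

VALID_OUTCOMES = {"won", "lost", "stalled", "withdrawn"}


def new_record(client, engagement_type, date_sent, industry, problem_type,
               tier, estimated_value, document_link):
    """Post-proposal protocol: create the archive record with outcome left blank."""
    return {
        "title": f"{client} - {engagement_type}",
        "date_sent": date_sent,
        "industry": industry,
        "problem_type": problem_type,
        "engagement_size": tier,
        "estimated_value": estimated_value,
        "document_link": document_link,
        "outcome": None,   # filled in later by record_outcome
        "notes": "",
    }


def record_outcome(record, outcome, note=""):
    """Outcome update: set the result; a loss must carry a one-sentence reason."""
    if outcome not in VALID_OUTCOMES:
        raise ValueError(f"outcome must be one of {sorted(VALID_OUTCOMES)}")
    if outcome == "lost" and not note.strip():
        raise ValueError("add a one-sentence reason for a lost proposal")
    record["outcome"] = outcome
    if note:
        record["notes"] = note
    return record


# Invented example.
rec = new_record("Client A", "ops review", date(2024, 4, 2), "manufacturing",
                 "operational efficiency", "medium", 15000, "proposals/client-a.pdf")
record_outcome(rec, "lost", "Buyer postponed the project to next fiscal year.")
print(rec["title"], rec["outcome"], rec["notes"])
```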

The quarterly review (thirty minutes, four times per year):

Filter the library by the last quarter. Review outcome distribution. Identify the highest-frequency proof blocks in won proposals. Identify the scope tier with the highest stall rate. Update proof block default status if patterns have shifted. Archive any proof blocks that have not been used in twelve months.

The quarterly review is the curation step that prevents accumulation. Any proof block that has not appeared in a won proposal in the previous year is either retired or flagged for rewrite. Any scope tier that has not closed a proposal in six months is reviewed against pricing data and buyer feedback.

The library maintains itself as long as the two routines are consistent. Inconsistency — skipping the post-proposal protocol, delaying outcome updates — is the only real threat to the system's integrity.

Summary

A consulting proposal library is the highest-leverage asset a solo consultant can build from work already completed. Every sent proposal contains reusable proof language, validated scope configurations, and pricing data — the library is the system that makes this value retrievable.

The structure is two databases in Notion or Airtable: a proposal archive with six tagged fields and a linked proof block vault. Maintenance requires two minutes per proposal sent and thirty minutes per quarter.

The library compounds in value with every record added: faster drafting, better scope design, evidence-based pricing, and win/loss intelligence that improves the entire proposal system over time.

The consultant who builds this library stops reconstructing institutional knowledge on every pitch — and starts deploying it.